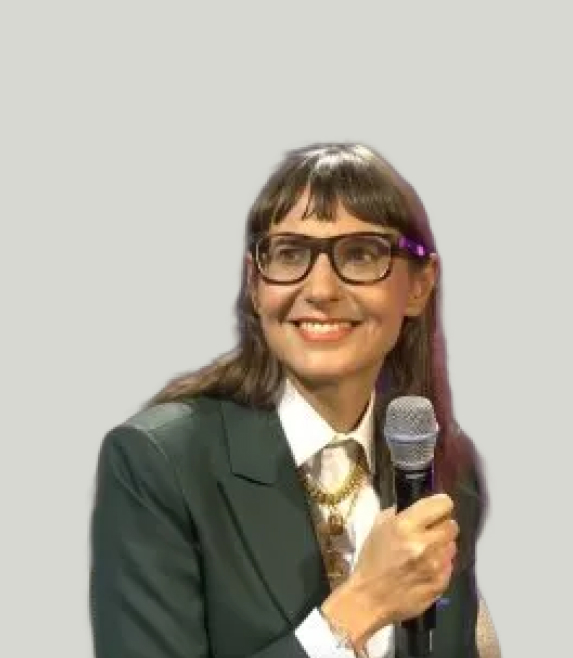

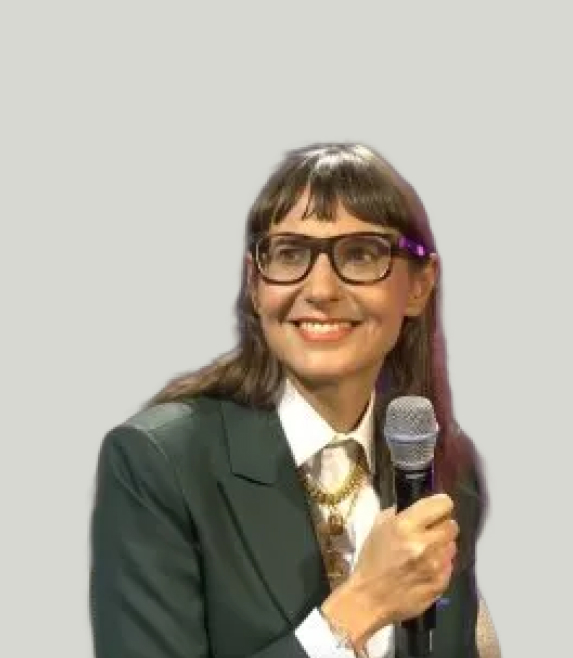

Gentiane Venture

Professor of Robotics, The University of Tokyo

Bringing together researchers and practitioners to advance robust, responsible, and deployable human state‑aware robotic systems.

Explore how multimodal data can be systematically transformed into adaptive robot behaviors for next-generation Human–Robot Interaction (HRI). We focus on the end-to-end pipeline from clean and generalizable data curation, through learning and modeling, to real-time adaptive behavior—with emphasis on human internal states such as mind wandering, anxiety, engagement, and trust.

Synthesize best practices, identify open research questions, and foster cross-disciplinary collaborations. A post-workshop summary will be published, and follow-up submissions to relevant venues will be encouraged.

We welcome short papers, extended abstracts, and posters that advance human state-aware robotics for real-world HRI.

Submit work that connects multimodal human data to adaptive robot behavior: methods, datasets, studies, systems, or lessons learned. We particularly encourage contributions that address robustness, responsible data practices, and in-the-wild deployment.

Accepted submissions will be archived on the workshop’s website.

Poster visibility: Poster presenters will prepare a 1-minute lightning-talk video. Videos will be promoted on social media, played during breaks, and linked on the workshop website.

- Gaze, speech, motion, and physiological signals for HRI.
- Engagement, stress, fatigue, and mind-wandering detection.
- Alignment, missing data, dataset bias, and robustness.
- Supervised, reinforcement learning (RL), imitation learning, and LLM-based interaction policies.
- Real-time learning and human-in-the-loop systems.
- Calibration, over-reliance, transparency, and human agency.
- Biosignals in the wild: privacy, consent, and data governance.
- Social robots, teleoperation, assistive robots, and safety-critical HRI.
- De-identification, access control, and reproducibility best practices.

Leading experts sharing complementary perspectives.

| Time | Activity |
|---|---|
| 8:45 | Welcome and Introduction |
| 9:00 | Invited Talk 1 — Prof. Tetsuya Ogata |
| 9:20 | Invited Talk 2 — Prof. Gentiane Venture |
| 9:40 | Breakout Group Discussion |
| 10:00 | Coffee Break + Posters + Lightning Talk Videos |
| 10:20 | Invited Talk 3 — Prof. Kristiina Jokinen |
| 10:40 | Invited Talk 4 — Prof. Silvia Rossi |
| 11:00 | Breakout Group Discussion |
| 11:20 | Coffee Break + Posters + Lightning Talk Videos |
| 11:40 | Panel Discussion & Lessons-Learned Report |
| 12:10 | Closing |

An interdisciplinary team spanning HRI, AI, and robotics.

Who should attend this workshop?

This workshop targets an interdisciplinary audience of researchers and practitioners in Human–Robot Interaction (HRI), robotics, artificial intelligence, and related fields who are interested in transforming multimodal human data into adaptive robot behavior. It is particularly relevant for those working on human state understanding, multimodal sensing and fusion, learning and modeling for interaction, and real-time behavioral adaptation.

The workshop is also intended for researchers concerned with trustworthy and responsible robotics, including issues of human agency, over-reliance, and the ethical use of sensitive data, as well as those developing or deploying adaptive robotic systems in domains such as social interaction, teleoperation, and safety-critical applications.

- Modeling internal states during interaction.
- Combining signals reliably across modalities.
- Policies and models grounded in HRI.
- Online inference and action selection.
- Agency, calibration, transparency.
- Robustness for real-world deployment.

This workshop is sponsored by:

For questions, sponsorship, or participation inquiries, reach out to the workshop team.

For inquiries about the workshop, please contact the organizing committee at: